Top 5 Potential Pitfalls In Google Analytics (and how to avoid them)

With 326 metrics and 338 ways to slice them, Google Analytics (or G.A.) is a complicated beast. It's powerful enough to keep track of your online presence, but if you're using it for analysis, there are some hidden traps that can easily trip you up. After seeing these happen (and making a few of them myself), I've put together the five that are most likely to confuse your analysis and skew your results:

Sampling

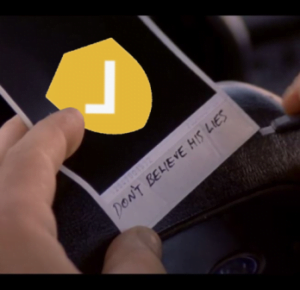

See this little green shield? If it's ever yellow, the data on the page is sampled. Sampling is how G.A. keeps your dashboards fast and responsive despite the huge volume of data in the backend; in other words, it saves Google processing time and effort. Given 1 million rows of data, G.A. will take only a percentage of them and assume that subset is representative of all the rows.

As you can guess, a sample of just 0.1% of users is not representative of your entire user base.
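To get a feel for why a tiny sample is risky, here is a rough simulation. It is not Google's actual sampling algorithm, just the general idea: keep a random fraction of rows and scale the total back up by the inverse of that fraction. The session data is made up, with an assumed conversion rate of about 1%.

```python
import random


def estimate_total(rows, sample_rate, seed=0):
    """Estimate sum(rows) from a random sample, scaled up by 1 / sample_rate.

    A simplified illustration of sampling, not Google's actual method.
    """
    rng = random.Random(seed)
    # Keep each row with probability sample_rate
    sample = [value for value in rows if rng.random() < sample_rate]
    # Assume the sample is representative and scale it back up
    return sum(sample) / sample_rate


# Made-up data: 1 million sessions, roughly 1% of which convert (1 = conversion)
data_rng = random.Random(42)
sessions = [1 if data_rng.random() < 0.01 else 0 for _ in range(1_000_000)]
true_total = sum(sessions)

print(f"true conversions: {true_total:,}")
for rate in (1.0, 0.1, 0.001):
    # Several seeds show how much the estimate wobbles at each sample rate
    estimates = [round(estimate_total(sessions, rate, seed=s)) for s in range(3)]
    print(f"sample rate {rate:g}: estimates {estimates}")
```

At a 100% sample rate the estimate is exact. At 0.1% it is built from roughly 1,000 sessions and only about 10 conversions, so each conversion that happens to land in (or out of) the sample shifts the scaled-up figure by 1,000.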

How do you get around this? At Propellernet, we've built a custom system that pulls data at the smallest granularity possible, so that each request stays small enough for G.A. not to sample it. If you don't have a system like that, Supermetrics and Google's paid G.A. 360 can give you similar functionality. At the most basic level, though, we'd recommend simply keeping an eye on that shield: if it's yellow, treat the numbers with caution.
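Pulling data in small slices mostly comes down to splitting a long date range into shorter requests. The helper below is a minimal sketch of just that splitting step; the name `date_chunks` is hypothetical, and the actual G.A. request is left out because it depends on which API or tool you use. One caveat: only additive metrics such as sessions or bounces can safely be added back together afterwards; users and rates can't.

```python
from datetime import date, timedelta


def date_chunks(start: date, end: date, days_per_chunk: int = 1):
    """Split the inclusive range [start, end] into (chunk_start, chunk_end) pairs."""
    if days_per_chunk < 1:
        raise ValueError("days_per_chunk must be at least 1")
    chunks = []
    current = start
    while current <= end:
        # Last day of this chunk, clipped so we never run past `end`
        chunk_end = min(current + timedelta(days=days_per_chunk - 1), end)
        chunks.append((current, chunk_end))
        current = chunk_end + timedelta(days=1)
    return chunks


# One request per week over ten days; each pair would become one (hypothetical) API call
for chunk_start, chunk_end in date_chunks(date(2018, 3, 1), date(2018, 3, 10), 7):
    print(chunk_start.isoformat(), "to", chunk_end.isoformat())
```

Daily chunks (the default) give the smallest requests, at the cost of more API calls.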

Expecting G.A. to match external sources

If you're comparing your Facebook results with your G.A. data and expecting them to match up perfectly, you're going to have a bad time… and let's not even talk about how G.A. mangles Facebook campaign names. The same goes for comparing the number of clicks on your Adwords ads with the number of sessions those ads generate.

One reason could be that users drop off AFTER they've clicked the ad but BEFORE the landing page (and the G.A. tracking code on it) has fully loaded. Another is that users' browser cookie settings may affect how, or whether, G.A. records them. In fact, there are hundreds of reasons why Google Analytics data won't match an external source, and unfortunately some difference is inevitable.
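Since some gap is inevitable, it can help to track its size rather than expect it to be zero. Here is a small sketch with made-up figures; the function name is hypothetical, and the 15% tolerance is an arbitrary assumption you would set from your own accounts' history, not an Adwords or G.A. standard.

```python
def click_session_gap(clicks: int, sessions: int) -> float:
    """Relative gap between reported ad clicks and G.A. sessions, as a fraction of clicks."""
    if clicks <= 0:
        raise ValueError("clicks must be positive")
    return (clicks - sessions) / clicks


TOLERANCE = 0.15  # hypothetical threshold; tune it to your own accounts

# Made-up example: Adwords reports 1,200 clicks, G.A. records 1,050 sessions
gap = click_session_gap(1200, 1050)
print(f"gap: {gap:.1%}")  # prints "gap: 12.5%"
if gap > TOLERANCE:
    print("larger gap than usual, worth investigating")
else:
    print("within the expected range")
```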

Each platform has slightly different metrics because they aren't measuring exactly the same thing. When reporting at Propellernet, we talk about having a "single source of truth": decide whether you're trusting Adwords, G.A. or Facebook, accept that each has a different philosophy of measurement, and stick with that one source.

Summing users

This caught me out when I was an analytics padawan; it's sneaky and so easy to miss.

It's tempting to think that, when looking at the total users for a period, you can just chuck a sum() over the daily figures and have done. Not true. Because 'users' actually means unique users (unique daily users in this case), summing assumes that the 20 users on Monday have no overlap with the users on Tuesday, when some of them may well be the same people coming back. Keep the idea of unique users in your head and you'll be fine. G.A. handles this correctly in its interface, but if you're exporting the data, either with the export tool or with Supermetrics, you'll need to be vigilant to avoid this error.
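A toy example makes the overlap concrete. The user IDs below are made up; a standard G.A. export usually won't give you IDs like these, which is why the safer fix is to request the users metric for the whole period directly rather than summing daily rows.

```python
# Made-up user IDs seen on each day
daily_users = {
    "Monday": {"u1", "u2", "u3", "u4"},
    "Tuesday": {"u3", "u4", "u5"},
    "Wednesday": {"u1", "u5", "u6"},
}

# Summing daily counts double-counts anyone who came back on another day
summed = sum(len(users) for users in daily_users.values())

# Taking the union of the sets counts each person once
unique = len(set().union(*daily_users.values()))

print(f"summed daily users: {summed}")  # prints "summed daily users: 10"
print(f"unique users:       {unique}")  # prints "unique users:       6"
```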

Averaging averages

In the same vein, you could be forgiven for assuming that the easiest way to work out the bounce rate for this period is to chuck an average() over it and have done.

It's not the worst mistake you could make, but it's a bad one. The problem is that each day's bounce rate should carry a different weight depending on its number of sessions. In the example above, Thursday should count for far more than Tuesday, BUT if you simply average the rates, you're giving them equal importance despite Thursday having more sessions.

You can minimise your chances of making this error by working in raw metrics for as long as possible and only aggregating at the end of your analysis. In practice, that means exporting the raw number of bounces and sessions, summing each over the right date range, and only THEN calculating =bounces/sessions. Do that and you'll be right as rain.
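Here is the difference in a few lines of Python, using made-up figures for two days with very different amounts of traffic.

```python
# Made-up daily figures: (day, sessions, bounces)
days = [
    ("Tuesday", 10, 5),       # 50% bounce rate on very few sessions
    ("Thursday", 1000, 200),  # 20% bounce rate on lots of sessions
]

# Wrong: average the daily rates, giving every day equal weight
naive = sum(bounces / sessions for _, sessions, bounces in days) / len(days)

# Right: sum the raw counts first, then divide once at the end
total_sessions = sum(sessions for _, sessions, _ in days)
total_bounces = sum(bounces for _, _, bounces in days)
weighted = total_bounces / total_sessions

print(f"average of daily rates: {naive:.1%}")     # prints "average of daily rates: 35.0%"
print(f"bounces / sessions:     {weighted:.1%}")  # prints "bounces / sessions:     20.3%"
```

The second figure is the same as weighting each day's bounce rate by its share of sessions, which is why it sits much closer to Thursday's 20%.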

Using the wrong segment

My final point is more of a user error than a mathsy pitfall, but it's easy to miss and will royally mess up your numbers. Segments are a great way to analyse a specific part of your audience, but they hang around after you close G.A.: when you log back in, you might be excluding a large chunk of your traffic simply because the last person using the G.A. account selected a particular segment.

When you’re using G.A., please please please check your segment.

It's so easy to forget that the last person in the account was using a PPC filter, and then wonder why your organic traffic is zero!

Just keep an eye on it and you'll be fine: check your segments every time you load G.A. to make sure all the settings are as you want them.

Unfortunately, avoiding these almost-hidden pitfalls (and there are plenty more, too many to cover in one piece) often comes down to experience. Once you've made these mistakes and spotted them, you won't make them again… I'd hope! Have someone experienced check over your workings and, if you're working in a spreadsheet, check your results against the G.A. web interface… Either that, or get in touch and let us do your analysis for you!